Introduction

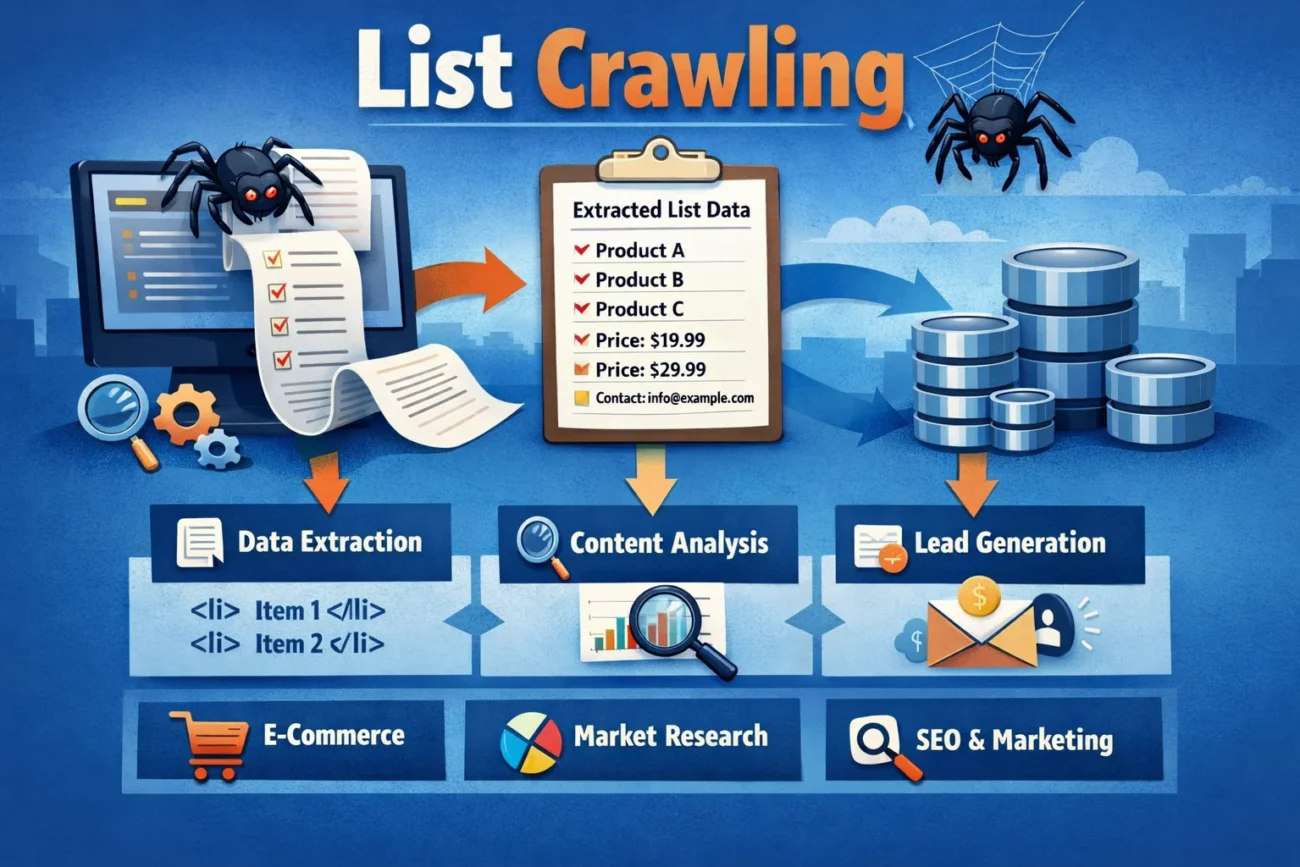

In the modern era of data-driven decision-making, List Crawling has emerged as a crucial technique for businesses, researchers, and tech enthusiasts alike. At its core, List Crawling refers to the automated process of navigating, extracting, and compiling data from structured lists available on websites, databases, or applications.

Unlike general-purpose web scraping, which may pull assorted pieces of information from anywhere on a website, List Crawling systematically traverses well-defined lists whose entries share the same fields, so the result is a consistent and organized dataset. That regularity tends to make extraction faster and less error-prone, making it valuable in a wide range of applications from market research to competitive analysis.

The Mechanics Behind List Crawling

To truly appreciate List Crawling, one must understand how it operates. The process typically begins with the identification of the target list. This could be anything from a product catalog on an e-commerce platform to a directory of businesses or professionals. Once the list is identified, a crawler—a type of automated bot—is deployed to traverse each entry systematically.

Modern List Crawling tools often integrate parsing logic that recognizes the repeated pattern behind each entry and handles pagination. Some also work around minor website restrictions, a capability that has to be weighed against the site's terms of service. Pattern recognition and pagination handling are particularly useful when lists are spread across multiple pages or dynamically generated, as the crawler can simulate user actions like scrolling or clicking “next” buttons to gather complete datasets efficiently.
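As a rough illustration of the pattern-recognition step, the sketch below pulls the text of every entry out of one list in an HTML page using only Python's standard library. The sample markup, the `products` class name, and the helper names `ListItemParser` and `extract_items` are assumptions made for this example; real pages will need their own selectors, and this version assumes a flat (non-nested) list.

```python
from html.parser import HTMLParser


class ListItemParser(HTMLParser):
    """Collects the text of every <li> inside a <ul>/<ol> with a given class.

    Assumes the target list is flat; nested lists are not handled.
    """

    def __init__(self, list_class):
        super().__init__()
        self.list_class = list_class
        self.in_list = False
        self.in_item = False
        self.items = []
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag in ("ul", "ol") and self.list_class in classes:
            self.in_list = True
        elif tag == "li" and self.in_list:
            self.in_item = True
            self._buffer = []

    def handle_endtag(self, tag):
        if tag == "li" and self.in_item:
            self.items.append("".join(self._buffer).strip())
            self.in_item = False
        elif tag in ("ul", "ol") and self.in_list:
            self.in_list = False

    def handle_data(self, data):
        if self.in_item:
            self._buffer.append(data)


# Hypothetical page fragment used only for this example.
SAMPLE_HTML = """
<ul class="products">
  <li>Widget A</li>
  <li>Widget <b>B</b></li>
</ul>
<ul class="footer-links"><li>About</li></ul>
"""


def extract_items(html, list_class):
    parser = ListItemParser(list_class)
    parser.feed(html)
    parser.close()
    return parser.items


print(extract_items(SAMPLE_HTML, "products"))  # ['Widget A', 'Widget B']
```

Only the list carrying the requested class is collected, which is what keeps the output consistent: the footer links in the sample are ignored.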

Applications of List Crawling in Business

Businesses have increasingly leveraged List Crawling to gain competitive advantages. One of the primary applications is in market intelligence. Companies can crawl competitor websites to monitor product offerings, pricing strategies, stock availability, and promotional campaigns. This data, when analyzed, provides actionable insights into market trends and helps businesses make informed strategic decisions.

Another application is in lead generation. By crawling directories of professionals, companies can extract contact details and build comprehensive sales or outreach lists. This eliminates the time-consuming manual process of gathering leads and significantly improves marketing efficiency.

List Crawling in Research and Academia

The benefits of List Crawling are not limited to businesses alone. Academic researchers and data scientists have found this technique invaluable for large-scale data collection. For instance, social scientists studying online consumer behavior can crawl user-generated lists such as reviews, rankings, or forum discussions to understand trends and preferences.

In the field of machine learning, List Crawling serves as a method for gathering high-quality training datasets. Large volumes of structured data are essential for training models that require accuracy and consistency, such as recommendation engines, predictive analytics models, or natural language processing systems.

Technical Challenges in List Crawling

While List Crawling offers significant advantages, it is not without challenges. One of the primary technical hurdles is dealing with anti-bot mechanisms implemented by websites. Many platforms deploy rate-limiting, CAPTCHA systems, or IP blocking to prevent automated data collection. Effective List Crawling requires sophisticated techniques to navigate these obstacles, such as rotating IP addresses, using headless browsers, or employing AI-driven pattern recognition to mimic human behavior.

Another challenge is handling dynamic content. Some lists are not static but are generated in real-time using JavaScript or API calls. Crawlers must be capable of interpreting or simulating these dynamic processes to ensure complete data extraction. Additionally, maintaining data accuracy and dealing with inconsistencies across multiple sources can be complex, especially when lists are frequently updated or structured differently.

Best Practices for Effective List Crawling

To maximize the effectiveness of List Crawling, several best practices should be followed. Firstly, it is essential to define clear objectives before initiating a crawl. Knowing exactly what data is required and how it will be used prevents unnecessary data collection and reduces computational load.

Secondly, respecting website terms of service and legal boundaries is crucial. Unauthorized crawling can lead to legal issues, IP bans, or reputational damage. Ethical List Crawling involves seeking permission when necessary, respecting robots.txt files, and avoiding excessive server requests that could disrupt a website’s functionality.

Thirdly, implementing data validation and cleaning processes ensures the extracted data is accurate, consistent, and ready for analysis. This includes removing duplicates, standardizing formats, and verifying entries against authoritative sources when possible.

Finally, scalability should be considered. As datasets grow, crawlers must handle increased volumes efficiently. Using distributed crawling systems or cloud-based solutions can help manage large-scale operations without compromising speed or accuracy.
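The validation and cleaning practice described above can be sketched in a few lines. The field names `name` and `price`, the dollar-formatted price strings, and the function name `clean_entries` are assumptions for illustration; a real pipeline would apply whatever rules fit its own data.

```python
def clean_entries(entries):
    """Normalize names, parse prices, and drop invalid or duplicate entries."""
    seen = set()
    cleaned = []
    for entry in entries:
        # Standardize formats: collapse runs of whitespace in the name.
        name = " ".join(entry.get("name", "").split())
        if not name:
            continue  # validation: skip entries with no usable name
        try:
            raw_price = str(entry.get("price", ""))
            price = float(raw_price.replace("$", "").replace(",", ""))
        except ValueError:
            continue  # validation: skip entries whose price cannot be parsed
        # Remove duplicates: compare names case-insensitively.
        key = name.lower()
        if key in seen:
            continue
        seen.add(key)
        cleaned.append({"name": name, "price": price})
    return cleaned


raw = [
    {"name": "  Widget   A ", "price": "$1,299.00"},
    {"name": "widget a", "price": "1299"},   # duplicate of the first entry
    {"name": "Widget B", "price": "n/a"},    # unparseable price
]
print(clean_entries(raw))  # [{'name': 'Widget A', 'price': 1299.0}]
```

Keeping the first occurrence of a duplicate is a design choice made here for simplicity; a crawl that revisits sources may prefer to keep the most recent one instead.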

Tools and Technologies for List Crawling

Several tools and technologies are commonly used for List Crawling. In Python, BeautifulSoup is a library for parsing HTML, Scrapy is a full crawling framework, and Selenium automates a real browser. For lists that depend heavily on JavaScript, browser automation libraries such as Puppeteer or Playwright can drive a headless browser so that crawlers interact with dynamic web content much as a user would.

Specialized list-crawling platforms provide user-friendly interfaces for non-technical users. These platforms often include drag-and-drop functionality, automated scheduling, and integrated data storage solutions, enabling efficient collection and management of large datasets without requiring deep programming knowledge.

Advanced Strategies to Optimize List Crawling Performance

As organizations increasingly rely on List Crawling for data extraction, optimizing performance becomes a critical priority. Efficient crawling saves time, minimizes costs, and avoids unnecessary requests to the target server. One effective strategy is asynchronous crawling, where multiple requests are in flight concurrently rather than issued one after another, so the crawler is not idle while it waits for each response. Concurrency should be capped so that the gain in speed does not come at the cost of overloading the site being crawled.
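A minimal sketch of asynchronous crawling with Python's `asyncio` is shown below. To keep it runnable without network access, `fetch_page` is a stand-in that sleeps instead of making an HTTP request, and the `example.com` URLs and the limit of three concurrent requests are assumptions chosen for the example.

```python
import asyncio


async def fetch_page(url):
    """Stand-in for a real HTTP request; sleeps instead of touching the network."""
    await asyncio.sleep(0.01)
    return f"<html>content of {url}</html>"


async def crawl_all(urls, max_concurrency=3):
    # The semaphore caps how many requests are in flight at once.
    semaphore = asyncio.Semaphore(max_concurrency)

    async def bounded_fetch(url):
        async with semaphore:
            return await fetch_page(url)

    # gather returns results in the same order as the input URLs.
    return await asyncio.gather(*(bounded_fetch(u) for u in urls))


def run_demo():
    urls = [f"https://example.com/list?page={n}" for n in range(1, 6)]
    return asyncio.run(crawl_all(urls))


pages = run_demo()
print(len(pages))  # 5
```

In a real crawler the body of `fetch_page` would be replaced by a call to an asynchronous HTTP client, while the semaphore pattern stays the same.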

Another important optimization technique is caching. By storing previously crawled data, crawlers can avoid redundant requests and focus only on updated or new entries. Incremental crawling further enhances efficiency by identifying changes in lists and updating only the modified data instead of reprocessing the entire dataset.
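One way to approximate incremental crawling is to store a hash of each entry and compare it on the next run. The sketch below assumes every entry is a JSON-serializable dictionary with a unique `id` field; the names `fingerprint` and `diff_against_cache` are hypothetical helpers, and the in-memory dictionary stands in for whatever persistent store a real crawler would use.

```python
import hashlib
import json


def fingerprint(entry):
    """Stable hash of an entry's content (keys sorted so ordering does not matter)."""
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def diff_against_cache(cache, entries, key="id"):
    """Return (new, changed) entries and update the cache in place."""
    new, changed = [], []
    for entry in entries:
        entry_id = entry[key]
        digest = fingerprint(entry)
        if entry_id not in cache:
            new.append(entry)
        elif cache[entry_id] != digest:
            changed.append(entry)
        cache[entry_id] = digest
    return new, changed


cache = {}
first_crawl = [{"id": 1, "price": 10}, {"id": 2, "price": 20}]
diff_against_cache(cache, first_crawl)

second_crawl = [{"id": 1, "price": 10}, {"id": 2, "price": 25}, {"id": 3, "price": 5}]
new, changed = diff_against_cache(cache, second_crawl)
print(new)      # [{'id': 3, 'price': 5}]
print(changed)  # [{'id': 2, 'price': 25}]
```

This version avoids reprocessing unchanged entries but still has to fetch the list itself. Avoiding the request entirely usually relies on server support, for example HTTP conditional requests using `ETag` and `If-None-Match`.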

Ethical and Legal Considerations in List Crawling

Ethics and legality are central to responsible List Crawling. While the technique itself is neutral, its applications can raise concerns. Extracting personal data without consent, violating website terms, or redistributing proprietary information can have serious legal implications under laws such as the GDPR in the European Union, the CCPA in California, or the Computer Fraud and Abuse Act in the United States.

To navigate this landscape, it is recommended to focus on publicly available and non-sensitive data. Additionally, organizations should implement internal policies for data governance, ensuring that List Crawling activities are transparent, accountable, and compliant with relevant regulations. Ethical practices not only protect businesses from legal repercussions but also enhance credibility and trust among users and stakeholders.
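One concrete compliance check is honoring a site's robots.txt file before requesting a URL. The sketch below uses Python's standard `urllib.robotparser` module; the robots.txt content, the `my-crawler` user agent, and the `example.com` URLs are made up for the example, and the rules are parsed from a string so the code runs without network access.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, inlined for this example.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""


def build_parser(robots_text):
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


def is_allowed(parser, user_agent, url):
    return parser.can_fetch(user_agent, url)


rules = build_parser(ROBOTS_TXT)
print(is_allowed(rules, "my-crawler", "https://example.com/products"))      # True
print(is_allowed(rules, "my-crawler", "https://example.com/private/data"))  # False
print(rules.crawl_delay("my-crawler"))                                      # 2
```

Against a live site, `set_url` followed by `read` would load the real file instead. Passing this check is a courtesy to the site operator and does not by itself settle questions about terms of service or data protection law.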

Handling Pagination and Deep List Structures

One of the most common challenges in List Crawling is dealing with pagination and deeply nested lists. Many websites divide their data across multiple pages to enhance user experience and reduce load times. For crawlers, however, this means additional steps are required to navigate through every page and ensure no data is missed.

Effective pagination handling involves identifying the navigation pattern used by the website. Some sites use numbered pages, while others rely on “load more” buttons or infinite scrolling. Advanced crawlers can simulate user interactions such as clicking buttons or scrolling down the page to trigger the loading of additional data. By doing so, they ensure comprehensive data extraction across all pages.
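For sites that expose a "next page" link, the traversal reduces to a loop that follows links until none remain. In the sketch below, `fetch_page` is a hypothetical function supplied by the caller that returns a dictionary with the page's `items` and the URL of the `next` page; `FAKE_SITE` is an in-memory stand-in for a real website. The visited set and page limit guard against link cycles and runaway crawls.

```python
def crawl_paginated(fetch_page, start_url, max_pages=100):
    """Follow 'next' links until none remain, a page repeats, or max_pages is hit."""
    items, url, visited = [], start_url, set()
    while url and url not in visited and len(visited) < max_pages:
        visited.add(url)
        page = fetch_page(url)
        items.extend(page["items"])
        url = page.get("next")
    return items


# In-memory stand-in for a three-page list (an assumption for this example).
FAKE_SITE = {
    "/list?page=1": {"items": ["a", "b"], "next": "/list?page=2"},
    "/list?page=2": {"items": ["c"], "next": "/list?page=3"},
    "/list?page=3": {"items": ["d"], "next": None},
}

print(crawl_paginated(FAKE_SITE.__getitem__, "/list?page=1"))  # ['a', 'b', 'c', 'd']
```

"Load more" buttons and infinite scrolling need a browser automation tool to trigger the loading, but the same loop structure applies: fetch, collect, and stop when no further content appears.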

Integrating List Crawling with Data Analytics

The true value of List Crawling lies in its integration with data analytics tools. Extracted data becomes significantly more valuable when it is analyzed to generate insights and drive decision-making. By feeding crawled data into analytics platforms, organizations can identify trends, patterns, and opportunities that would otherwise remain hidden.

For instance, businesses can analyze pricing data from competitors to identify optimal pricing strategies. Similarly, customer reviews collected through List Crawling can be analyzed using sentiment analysis techniques to understand customer preferences and improve products or services. In the financial sector, crawled data can be used to monitor market trends and predict future movements.
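As a small illustration of the pricing case, the sketch below summarizes crawled competitor prices with Python's `statistics` module. The shop names and prices are invented sample data, and `summarize_prices` is a hypothetical helper; a real analysis would typically compare like-for-like products rather than pooling all prices.

```python
from statistics import mean, median


def summarize_prices(prices_by_competitor):
    """Pool all competitor prices and report basic summary statistics."""
    all_prices = [p for prices in prices_by_competitor.values() for p in prices]
    return {
        "min": min(all_prices),
        "median": median(all_prices),
        "mean": round(mean(all_prices), 2),
        "max": max(all_prices),
    }


# Invented sample data standing in for crawled results.
crawled = {
    "shop_a": [19.99, 24.99],
    "shop_b": [21.50],
    "shop_c": [22.00, 30.00],
}
summary = summarize_prices(crawled)
print(summary["median"])  # 22.0
```

A median is often a more useful reference point than a mean here, because a single unusually high or low listing shifts it less.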

The Role of APIs in Modern List Crawling

In recent years, APIs have become an important component of List Crawling. Many websites provide APIs that allow developers to access structured data directly, eliminating the need for traditional scraping methods. Using APIs is often more efficient, reliable, and compliant with website policies.

APIs provide data in standardized formats such as JSON or XML, making it easier to parse and process. They also offer features such as filtering, sorting, and pagination, which simplify data extraction. However, APIs may have limitations such as rate limits or restricted access, requiring careful management to ensure optimal performance.
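The sketch below shows one way to combine API pagination with rate-limit handling. Everything about the API is assumed for the example: the JSON shape with `results` and `next_cursor` fields, the `RateLimited` exception (standing in for an HTTP 429 response), and the caller-supplied `call_api` function. The fake API raises one simulated rate-limit error so the retry path is exercised.

```python
import json
import time


class RateLimited(Exception):
    """Raised by the (hypothetical) transport when the API answers HTTP 429."""


def fetch_all(call_api, max_retries=3, base_delay=0.01, sleep=time.sleep):
    """Collect every record from a cursor-paginated JSON API, backing off on rate limits."""
    records, cursor = [], None
    while True:
        for attempt in range(max_retries + 1):
            try:
                body = call_api(cursor)
                break
            except RateLimited:
                if attempt == max_retries:
                    raise  # give up after the final retry
                sleep(base_delay * 2 ** attempt)  # exponential backoff
        payload = json.loads(body)
        records.extend(payload["results"])
        cursor = payload.get("next_cursor")
        if not cursor:
            return records


def make_fake_api():
    """Two-page fake API whose second call is throttled once."""
    pages = {
        None: {"results": [{"id": 1}, {"id": 2}], "next_cursor": "p2"},
        "p2": {"results": [{"id": 3}], "next_cursor": None},
    }
    state = {"calls": 0}

    def call_api(cursor):
        state["calls"] += 1
        if state["calls"] == 2:
            raise RateLimited()  # simulate one throttled request
        return json.dumps(pages[cursor])

    return call_api


print(fetch_all(make_fake_api()))  # [{'id': 1}, {'id': 2}, {'id': 3}]
```

Many real APIs report how long to wait in a `Retry-After` response header; when that is available, using it is generally preferable to a fixed backoff schedule.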

The Future of List Crawling

The future of List Crawling is closely linked to advancements in artificial intelligence and automation. AI-powered crawlers are becoming increasingly adept at recognizing patterns, adapting to website changes, and making real-time decisions about which data to extract. This reduces human intervention and increases the accuracy and efficiency of data collection.

Integration with cloud computing and big data platforms will allow crawlers to handle unprecedented volumes of information. This opens new possibilities for industries such as finance, healthcare, and e-commerce, where timely access to large datasets can drive innovation, improve decision-making, and create competitive advantages.

Conclusion

List Crawling has evolved from a technical curiosity into a strategic tool for businesses, researchers, and data enthusiasts. Its ability to systematically collect structured data from diverse sources offers unparalleled opportunities for market intelligence, research, lead generation, and data-driven innovation. By understanding the mechanics, challenges, and best practices of List Crawling, organizations can harness its potential while ensuring ethical and legal compliance.

As technology continues to advance, mastering List Crawling will be a key differentiator for those seeking to leverage information effectively, stay ahead of competitors, and draw meaningful insights from the vast sea of digital data. Adopting this approach today helps make tomorrow's decisions better informed, more precise, and strategically sound.